While higher education leaders may say they’re optimistic about AI and open to integrating it into their institutions’ operations, a look at how they actually use the revolutionary tool paints a more cautious picture.

An international survey by Anthology found that only about 26% of U.S. leaders use AI tools frequently or occasionally, far below the rates reported by leaders elsewhere. Around half of leaders in the United Arab Emirates (54%) and Singapore (49%) reported using them.

U.S. leaders who shy away from AI may do so out of fear or a lack of curiosity. The report found that only 16% believe AI will revolutionize teaching and learning, and more than one-third (35%) of university leaders worry it will create new challenges in identifying plagiarism. In addition, 19% are concerned it will exacerbate inequity and perpetuate bias in education.

However, after working with over 350 educators predominantly at the college level, Sean Michael Morris sees this trend of cautious leaders as a result of a timeless truism: We fear what we don’t know. The antidote? Unlocking their confidence with informed training. Morris, vice president at Course Hero and instructor for its four-week AI Academy, conducts surveys to gauge educators’ thoughts on AI before and after training.

“When we first started, about a quarter of them said they didn’t feel prepared to teach with AI,” he says. “By the end of the course, 90% of them were saying that they felt somewhat or fully prepared to use AI in the classroom.”

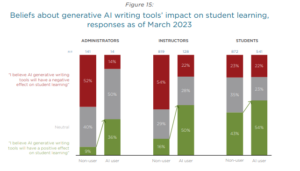

Morris’ findings echo those of an earlier Tyton Partners survey and an EDUCAUSE QuickPoll, both of which found that optimism about AI’s implementation in higher education grows after exposure.


Educators’ 4 pillars to implementing AI

As exposure to AI fosters deeper institutional buy-in, Morris finds that educators, when left to their own devices, are already on the right track toward using it properly, particularly in how intentional they are about implementing it effectively.

“It’s super impressive. Teachers are helping each other become much more discerning,” he says. “There’s those who are just reacting, but I’m seeing a very different kind of dialogue amongst faculty; they’re curious. They don’t know what they don’t know, and they want to find that out.”

To aid educators, especially those still staunchly conservative about using AI in the classroom, Morris addresses AI use through four pillars: AI literacy, designing AI-assisted assessment, academic integrity and teaching AI literacy to students. As deep in the weeds as AI education may get, Morris relates each of these facets to traits that are distinctly human.

Morris’ educational framework is grounded in critical pedagogy, which is rooted in academic equity and the emancipation of marginalized groups. Critical pedagogy’s mindfulness of all kinds of student groups aligns with the Biden administration’s recent executive order, which requires that educational policies on AI be consistent with advancing equity and civil rights.

“Teachers have the capacity for being compassionate with their students and caring about their success. AI is just AI. It’s not a person,” he says. “It may mimic or imitate care, but it isn’t that person who will get creative for a student facing trouble.”

To uphold an institution’s academic integrity, Morris believes, educators should view it as something they practice, not just a treasure they need to safeguard against unruly students.

“What does it mean to act with academic integrity? Does it mean plagiarism? Is it about a sort of fearlessness, taking risks? Is it about the investigation? Is it about honoring your own perspectives and questions?” he says. “It’s a very different perspective, when you look at it that way, and AI can become a tool for academic integrity that you can use to enhance the way you behave with academic integrity, as opposed to something that threatens it.”